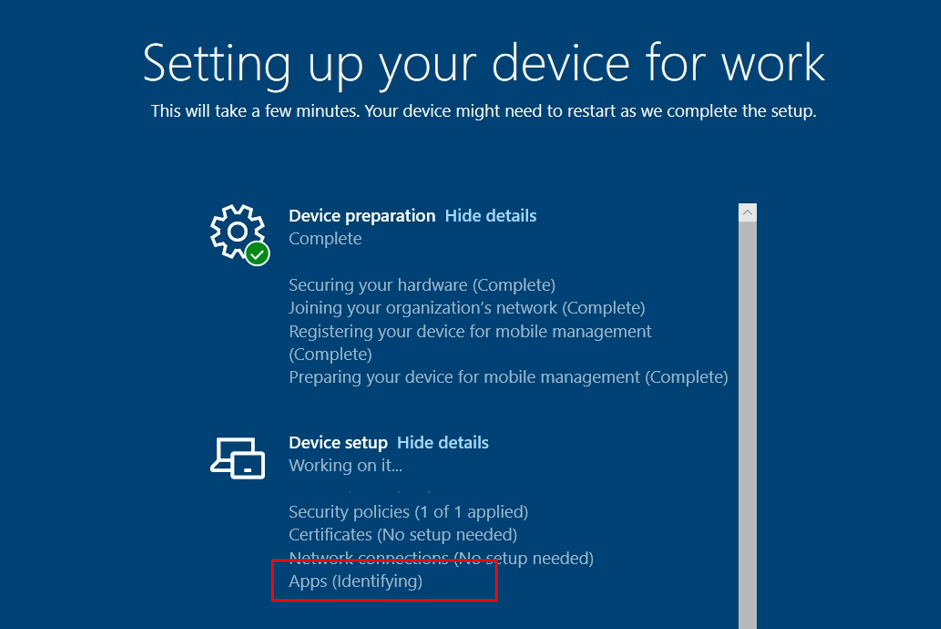

This blog will show how a broken PowerShell script can cause a 30-minute delay in your Enrollment Status Page (ESP) when enrolling a device with Windows Autopilot Pre-Provisioning. During the device setup phase, I noticed Autopilot was stuck on “Identifying” apps.

1. Autopilot stuck on Identifying apps

Before showing what exactly broke, let’s start by looking at the issue itself. When I was writing my latest blog, which mentions the fake Autopilot@ and fooUser accounts used by Autopilot for Pre-provisioned deployments, I stumbled upon a weird “Identifying” delay and decided to write a separate blog about it.

After the device preparation ESP phase, the device started the device setup part and needed to start the “Apps Identifying” part.

Please Note: This is a pure AADJ environment, so no evil or weird HAADJ issues here 🙂

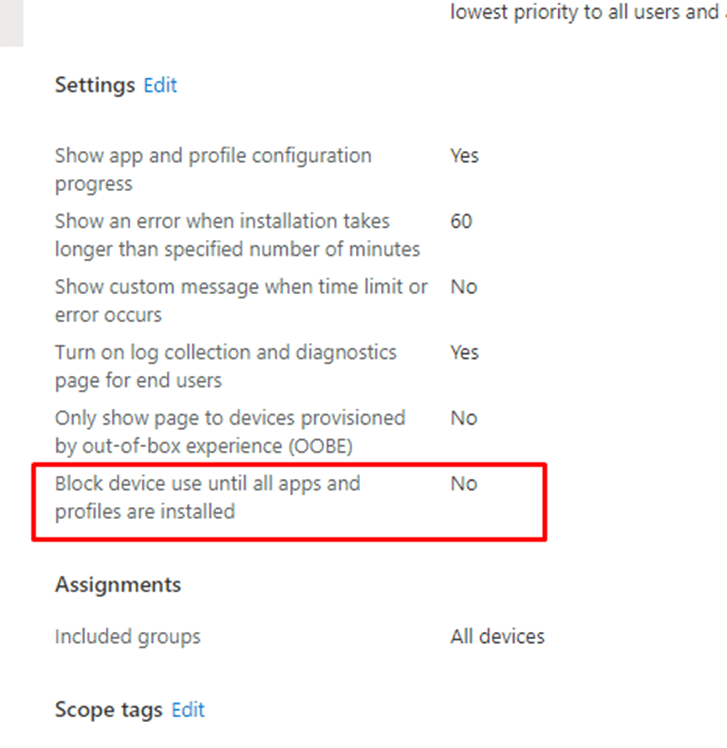

Please Note: This “Apps (Identifying)” issue could also occur when you have configured the Enrollment Status Page with “Block device use until all apps and profiles are installed” enabled.

This time, it wasn’t failing because of a misconfigured ESP.

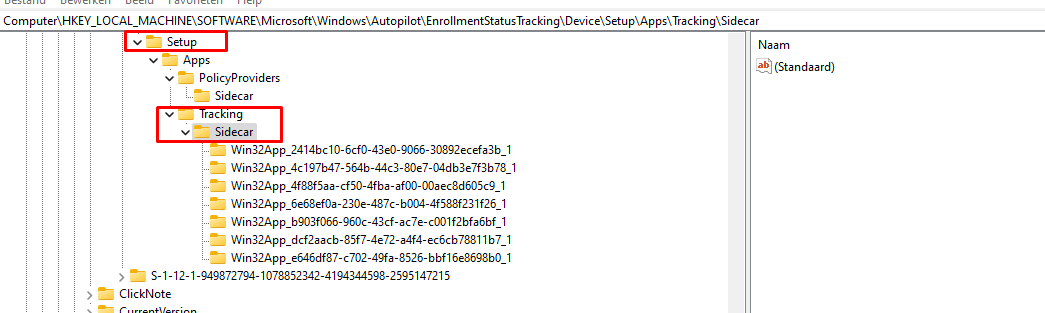

As shown below, the device took its time to identify the seven apps that needed to be installed. After waiting exactly 30 minutes, the “Setup” key was created, containing all of the Win32 apps that needed to be tracked.

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\Autopilot\EnrollmentStatusTracking\Device\Setup\

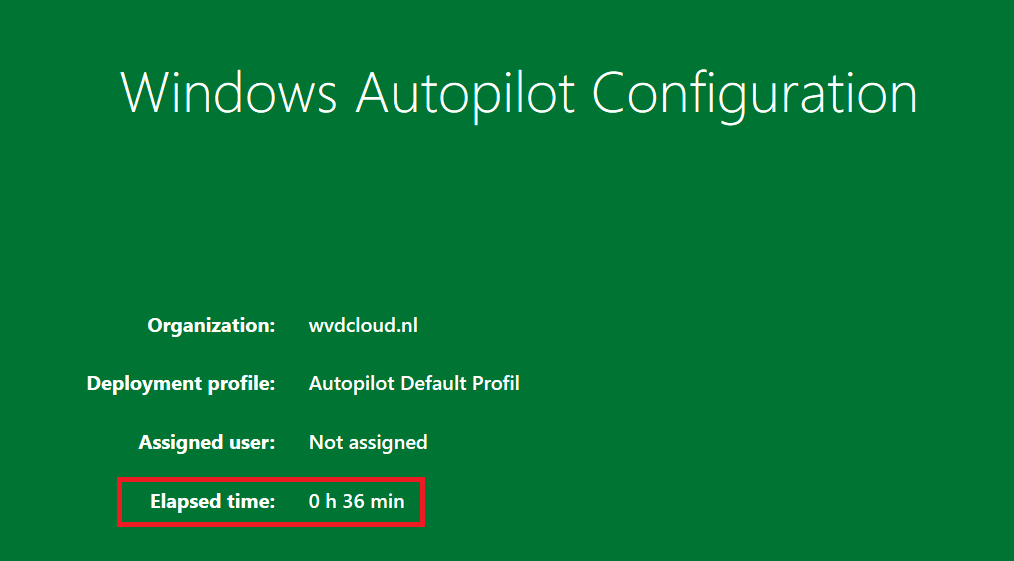

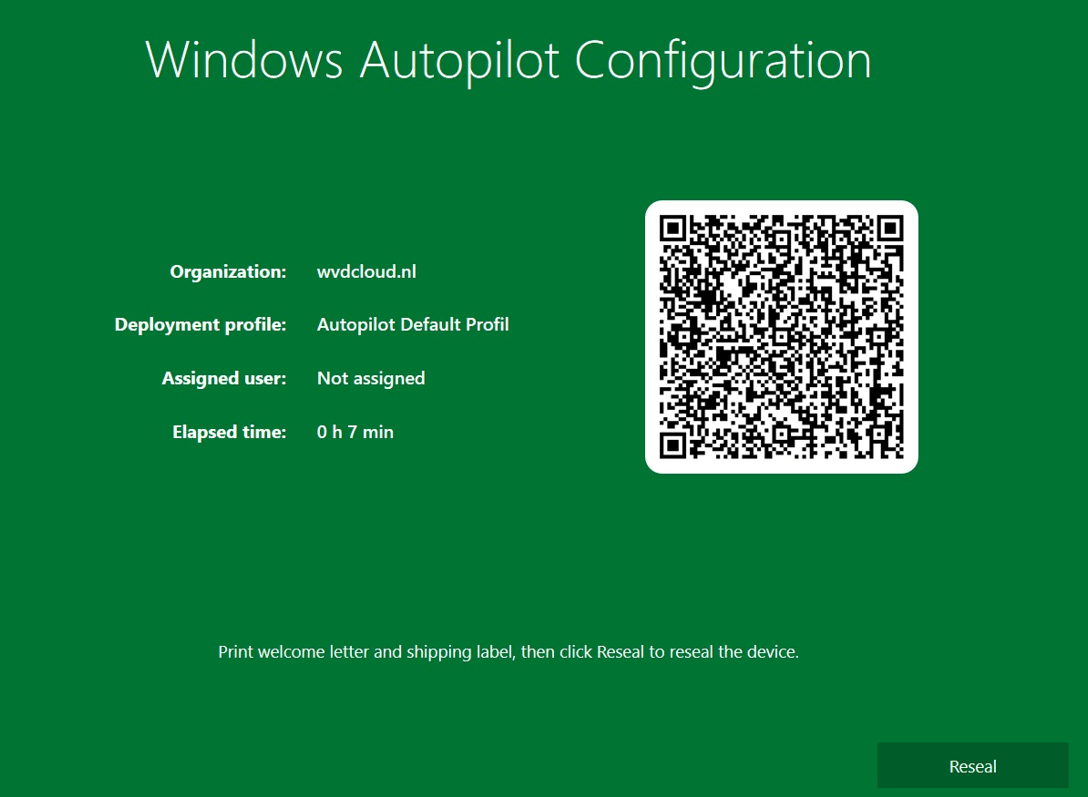

At the same moment that “Setup” key was created, the device started installing the ESP-required apps. Installing those 7 small apps (Office 365 Apps weren’t included) “only” took about 36 minutes, and I was shown a nice green Autopilot Sealing screen!

36 minutes… for a couple of apps… that ain’t right! Let’s dive into it a bit more.

2. Troubleshooting Autopilot stuck on Identifying apps

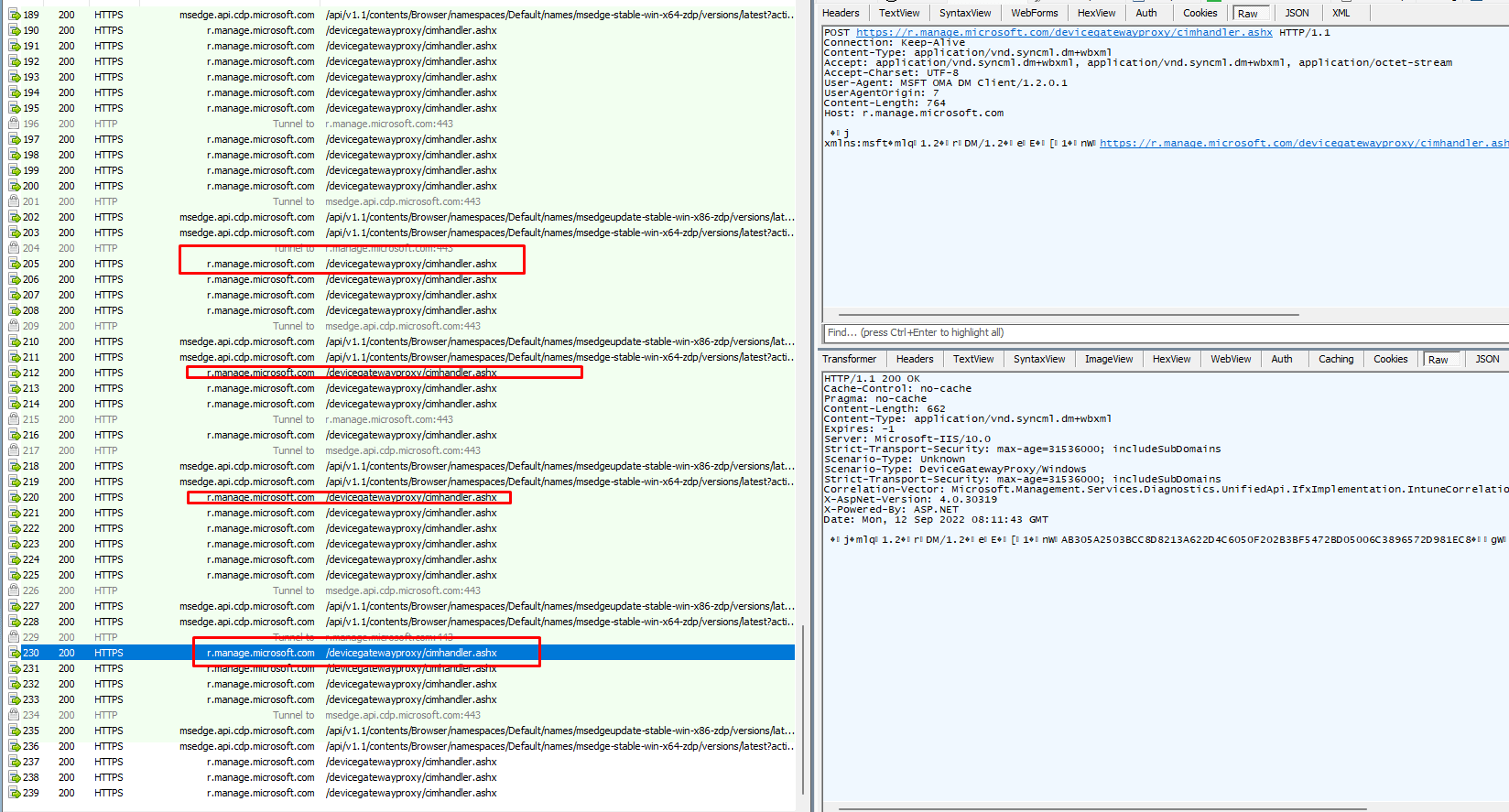

I started troubleshooting the Autopilot stuck on Identifying apps by running an ETL trace. While running the trace, I watched Fiddler go. When taking a closer look at the Fiddler trace, I noticed that almost every minute there was an outbound connection to r.manage.microsoft.com.

For about 30 minutes, it kept sending the same information every couple of minutes, over and over again, and nothing more… Could that explain why Autopilot was stuck on Identifying apps for 30 minutes?

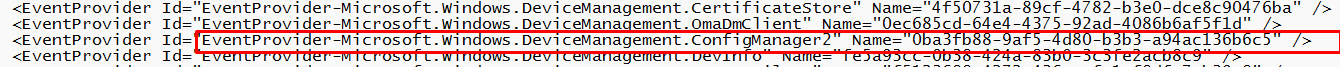

So I needed to take a look at the ETL trace, but before I could do so, I needed to add some additional providers to my WPRP file.

As shown above, I added the Configmanager2 event provider with GUID “0ba3fb88-9af5-4d80-b3b3-a94ac136b6c5” to my WPRP file. Feel free to take a look at it.

https://call4cloud.nl/wp-content/uploads/2022/09/wprp.zip

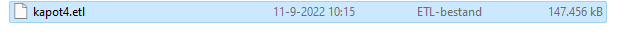

After stopping the trace, I noticed that the ETL file was a little bit larger than I expected, but hey, I am not complaining. The bigger it is, the more information it has, right?

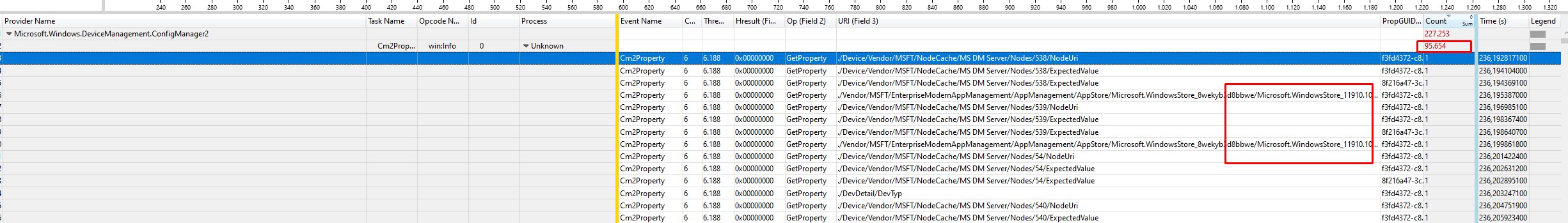

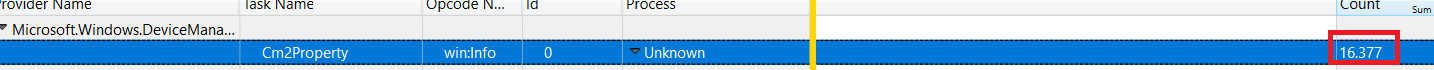

When opening this ETL file in the WPA tool, I immediately noticed a lot of “counts” in the Microsoft.Windows.DeviceManagement.Configmanager2 provider section.

At first, I had the “stupid” idea that it was also trying to list all of the available Microsoft Store apps before starting with the Identifying apps part, but I guess that wasn’t the case! Let me show you why.

Luckily, I keep an archive of all the traces I performed in the past… because you can’t have enough of them! Just a couple of days earlier, I did almost the same thing on the same device, but that time I did NOT use the Autopilot pre-provisioning option but the normal user-based Autopilot enrollment.

When opening this user-based Autopilot trace, I noticed a count of only 16,000 rows instead of 95,000!

So, when comparing those two, I was pretty sure it was doing “something” that only occurs when running Autopilot for pre-provisioned deployments.

3. The ESP Flow

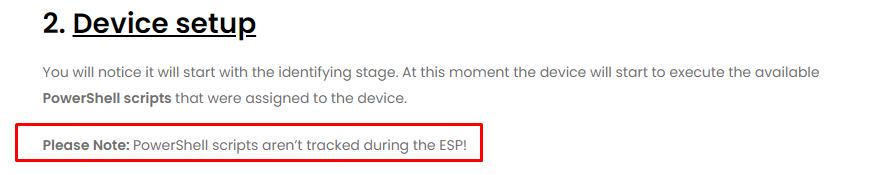

I decided to do something funny. I started reading my own blog about the Enrollment Status Page (ESP), hoping to spot something, because it is “something” that is occurring in the ESP, right? I guess I did… I even placed a note about it… Oh my… did I drink too much #membeer?

PowerShell scripts aren’t tracked during the ESP!!! So, something not tracked could give us some issues. But how, as I can’t come up with any reason why the PowerShell scripts could “only” give us issues when performing a pre-provisioning… or??

4. Autopilot stuck on Identifying apps Troubleshooting part 2

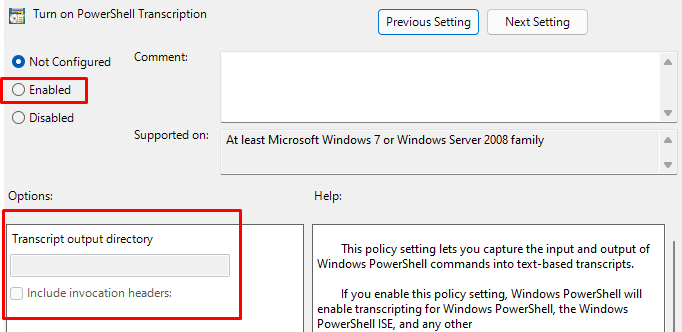

I decided to run another pre-provisioning, but this time with some PowerShell logging added! To do so, I enabled PowerShell transcription logging and configured the output directory.

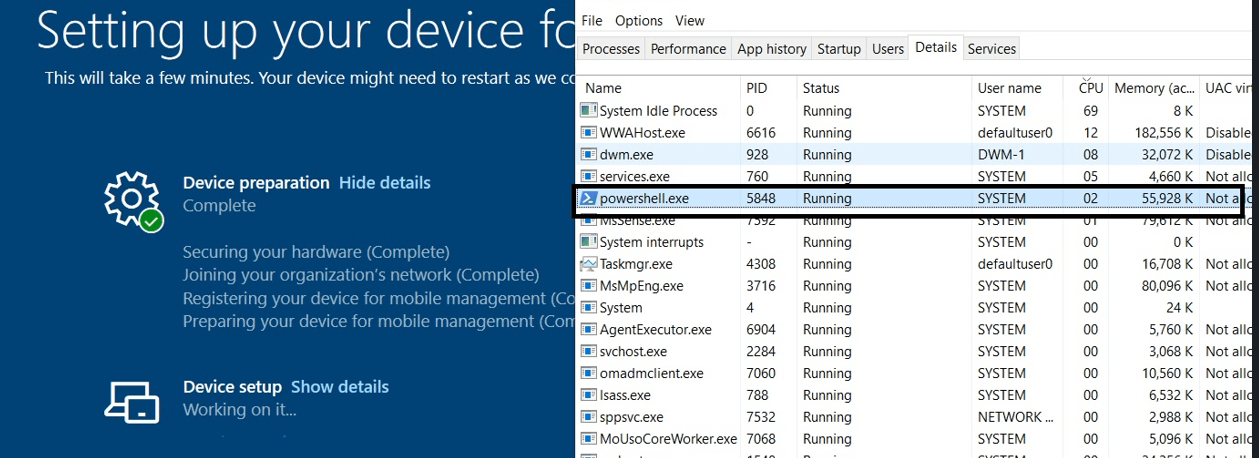

With the PowerShell transcription logging in place, I started the pre-provisioning. Before I show you the output of the transcription log file: I also opened Task Manager and the Intune Management Extension AgentExecutor log file.

At the moment the ESP was busy “working on it…”, I noticed that a PowerShell session in the system context had been launched… but it was NOT closed! It just sat there and did nothing!

Also, when looking at Resource Monitor, I saw no disk activity at all! So it looks like we got ourselves a lingering PowerShell session in the system context. After closing the PowerShell session itself, the device continued and started to install the required apps. So PowerShell was to blame for Autopilot being stuck on Identifying apps for 30 minutes?

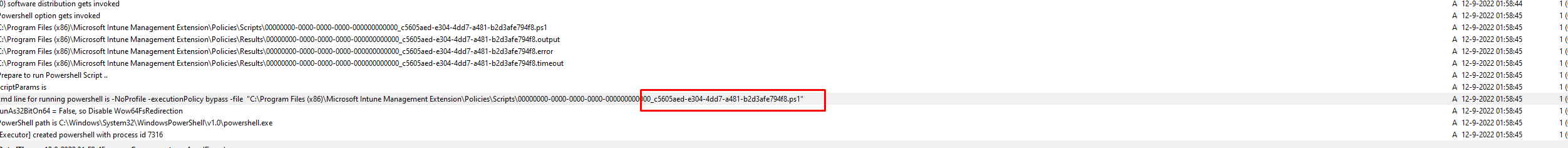

After closing that PowerShell session, I decided to open the AgentExecutor log.

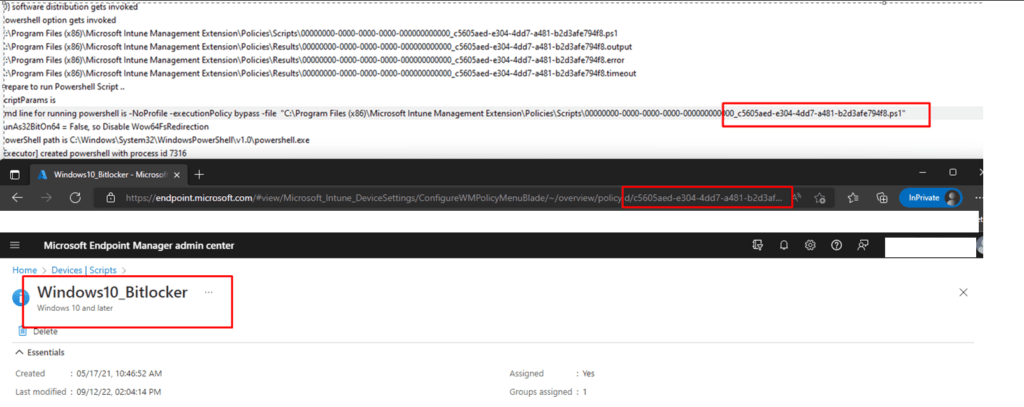

The last line of this log mentioned a specific PowerShell script it was trying to run. I opened the Endpoint portal and started searching for the ID.
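If you want to pull the IDs out of a log line quickly, a minimal Python sketch like the one below could help. The sample line is invented for illustration and does not reproduce the real AgentExecutor log format; on a device, the log typically lives under C:\ProgramData\Microsoft\IntuneManagementExtension\Logs\. Which of the extracted GUIDs is the script ID depends on the actual line, so treat the result as a list of candidates to search for.

```python
import re

# Matches the usual 8-4-4-4-12 hexadecimal GUID layout.
GUID_PATTERN = re.compile(
    r"[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}"
)


def extract_guids(log_text: str) -> list[str]:
    """Return every GUID-shaped token in the text, lowercased,
    in order of appearance and without duplicates."""
    seen = []
    for guid in GUID_PATTERN.findall(log_text):
        guid = guid.lower()
        if guid not in seen:
            seen.append(guid)
    return seen


# Hypothetical log line, made up for this example only.
sample_line = (
    r"Running script C:\Scripts"
    r"\11111111-2222-3333-4444-555555555555_AAAAAAAA-BBBB-CCCC-DDDD-EEEEEEEEEEEE.ps1"
)
print(extract_guids(sample_line))
```

Feeding it the last few lines of the log instead of a single line works the same way.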

As shown below… ow my… that’s the Windows10_Bitlocker script I used when trying to come up with a bonkers solution.

Device Health Attestation Flow | DHA | TPM | PCR | AIK (call4cloud.nl)

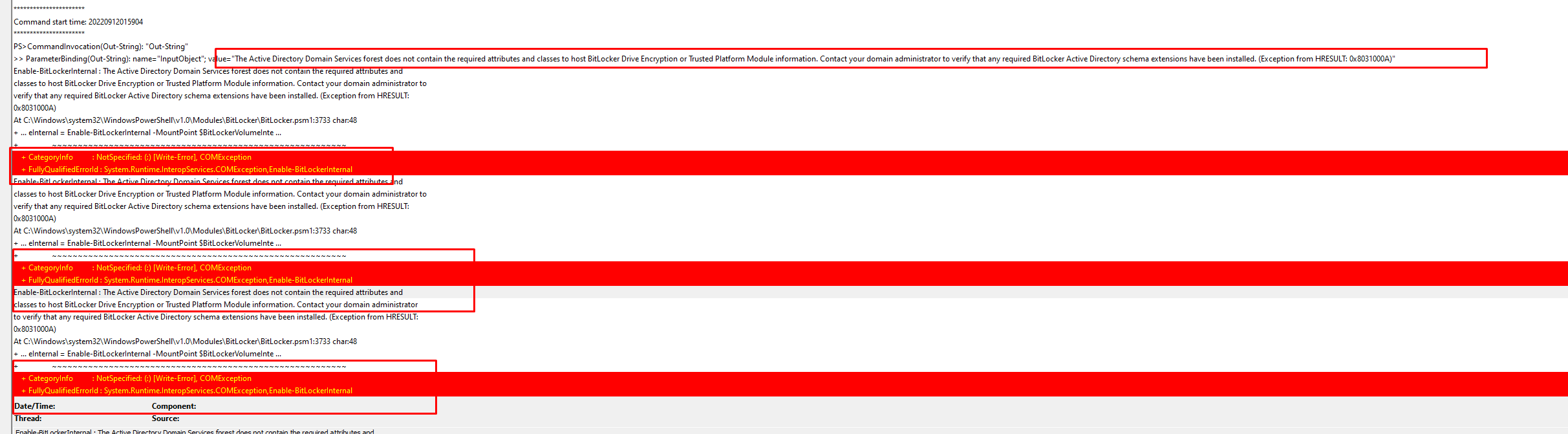

That’s odd, because that script should only configure BitLocker and try to start encrypting the drive with the specified encryption during the device phase, instead of waiting until some user logs in. Let’s continue to the PowerShell transcription log.

As shown above, the device can’t be encrypted because the Active Directory Domain Services forest does not contain the required attributes and classes to host BitLocker Drive Encryption or TPM information. But… it tries again, and again, and again, until eternity…

Or at least until the PowerShell script times out after 30 minutes, because 30 minutes is exactly the timeout for a PowerShell script deployed to the device. Guess what happens when the PowerShell script times out! Yes… the device will continue with the ESP, start the Win32 app installations, and start tracking those apps.
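To make the timing a bit more tangible, here is a small Python model of a script that keeps retrying a step that can never succeed, until its host kills it. This is only a simulation under assumptions: the 30-minute limit comes from the text above, while the 60-second retry interval and the function name are made up for the example.

```python
def simulate_script_run(retry_interval_s, host_timeout_s=30 * 60,
                        succeeds_on_attempt=None):
    """Model a script that retries a step until it works or the host kills it.

    retry_interval_s    -- seconds the script sleeps between attempts (assumed)
    host_timeout_s      -- when the host gives up on the script (30 minutes)
    succeeds_on_attempt -- attempt number that succeeds, or None for "never"

    Returns (elapsed_seconds, attempts, outcome).
    """
    if retry_interval_s <= 0:
        raise ValueError("retry_interval_s must be positive")

    elapsed = 0
    attempts = 0
    while elapsed < host_timeout_s:
        attempts += 1
        if succeeds_on_attempt is not None and attempts >= succeeds_on_attempt:
            return elapsed, attempts, "completed"
        elapsed += retry_interval_s  # the script sleeps, then tries again
    # The script never returned by itself; the host timeout ended it.
    return host_timeout_s, attempts, "killed by timeout"


# A step that can never succeed: the script burns the full 30 minutes.
print(simulate_script_run(60))
# The same loop when the step works on the third try.
print(simulate_script_run(60, succeeds_on_attempt=3))
```

In the first case the model only stops because of the host timeout, which matches the 30 minutes of “Identifying” seen during pre-provisioning.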

5. The Autopilot stuck on Identifying apps issue

First, some more explanation before I show you the “ooooooopsssieee”. When you are enrolling a device with Autopilot Pre-Provisioning, the device will be joined to Azure AD with a fake autopilot@ account, as I showed you in my latest blog.

Autopilot Pre-Provisioning | Technical Flow V2 | Intune (call4cloud.nl)

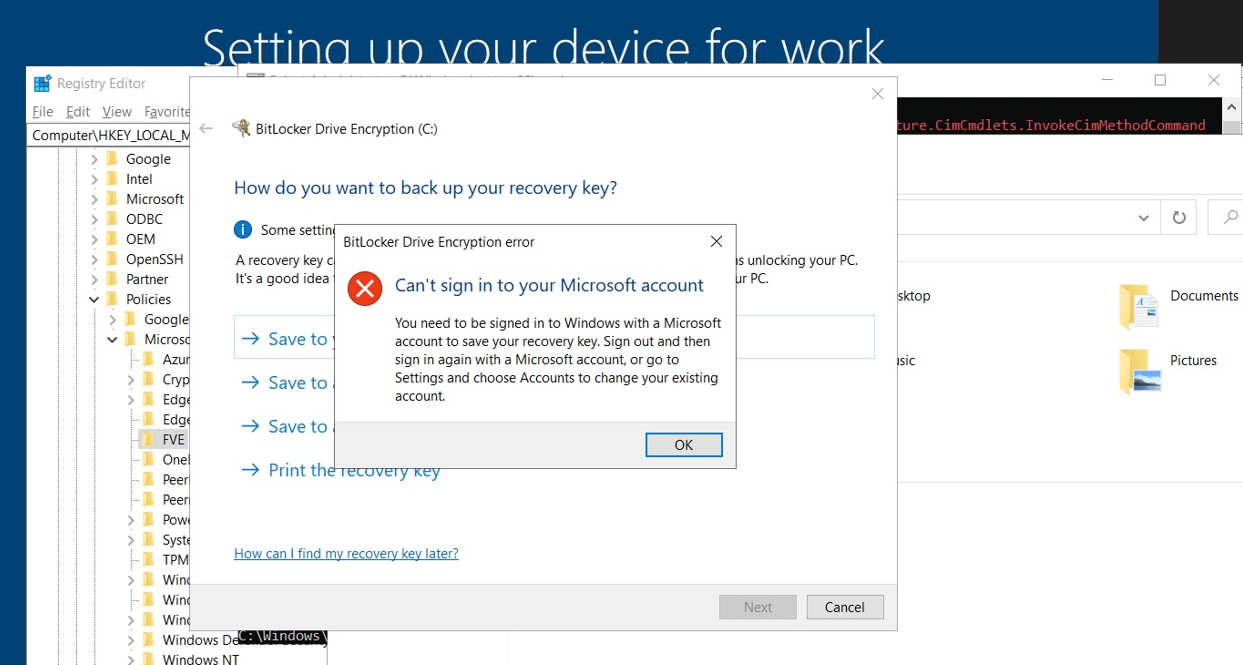

After enrolling into Intune, the Azure device certificate will be whacked, and NO user will be logged in. Guess what doesn’t work when your device isn’t Azure AD joined anymore and you aren’t logged in with a Microsoft Account?

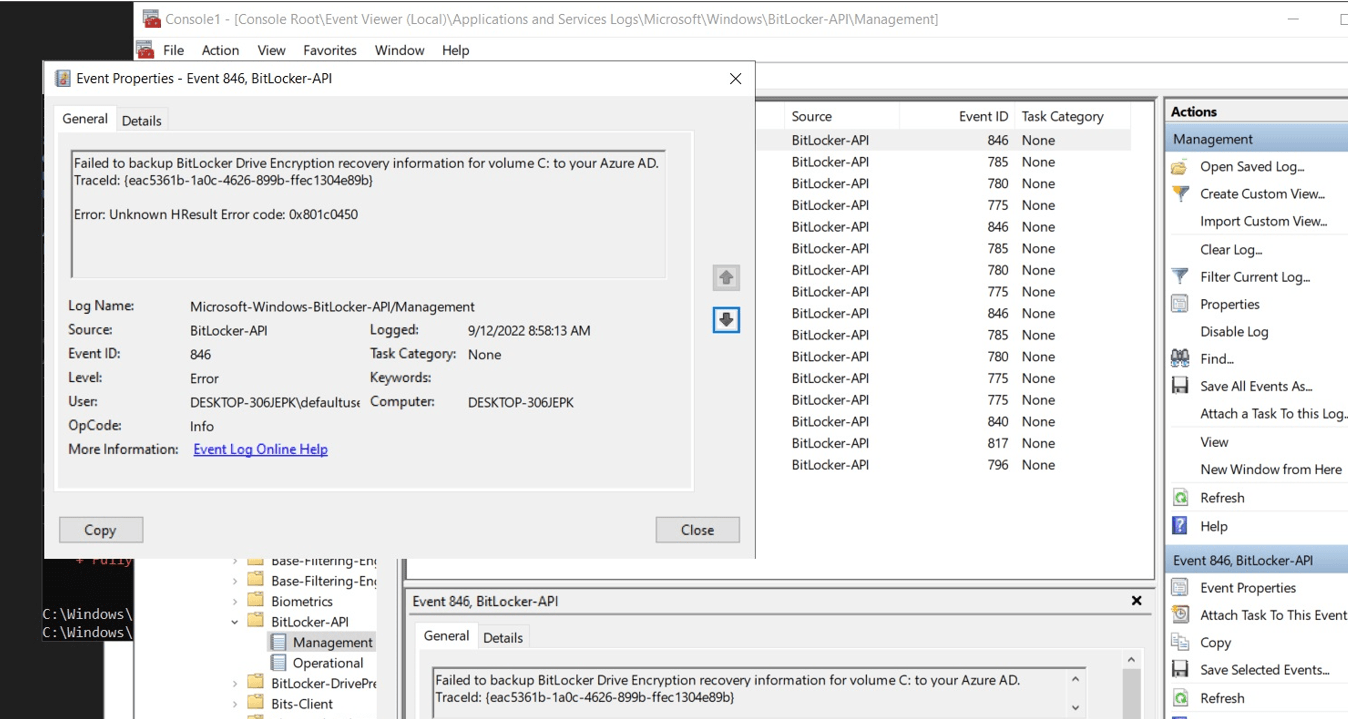

When we have a device that is NO longer Azure AD joined, and we are not logged in with our Microsoft Account, it’s pretty obvious we can’t back up our BitLocker Drive Encryption recovery information. You will notice a nice 0x801c0450 error in your BitLocker-API event log. Of course, when trying to encrypt the device manually with BitLocker and upload the key, you will be prompted with a message that you can’t sign in with your Microsoft Account.

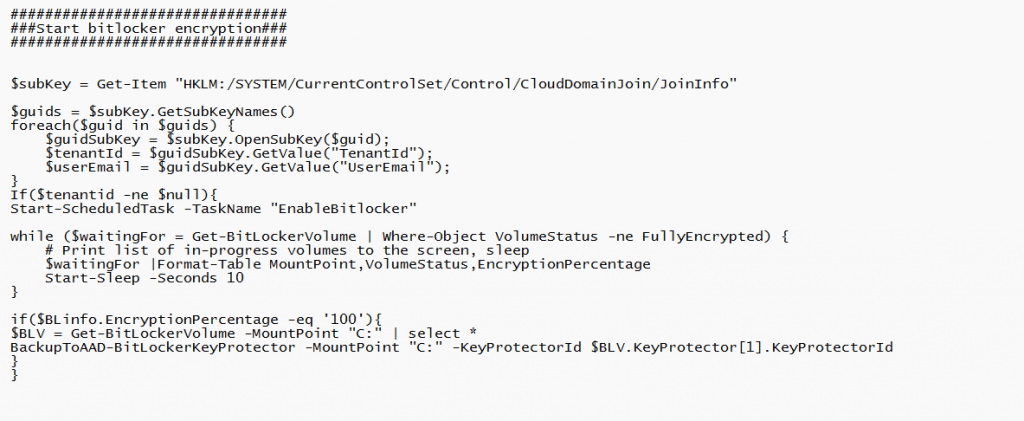

But who cares? Because you would expect that when launching that PowerShell script to encrypt the disk, it will just try to encrypt it, and when it fails… uh, the script will fail, right? So I decided to fetch back the PowerShell script that was uploaded to Intune by using another PowerShell script.

Guess what happened when opening that PowerShell script.

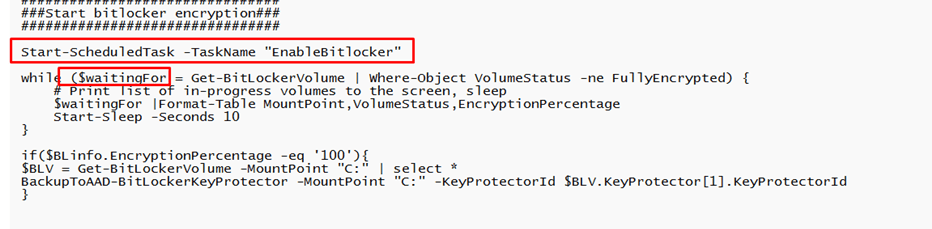

When taking a look at the PowerShell script, I remembered I had been playing around with an idea to make sure the BitLocker recovery key is escrowed to Azure.

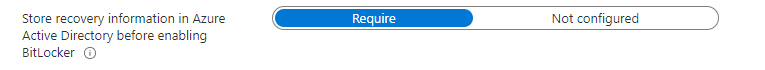

If you combine the Waiting for / While part with the requirement to store recovery information in Azure Active Directory before enabling BitLocker, on a device that isn’t Azure AD joined, guess what happens?

Damn… damn… stupid me! Okay, I didn’t break anything. Sometimes, you try to fix something but end up breaking something else.

I decided to add a couple of lines to the BitLocker PowerShell script (of course, I could also convert it to a Win32 app or just remove those lines…). I made sure the script first checks if the device is Azure AD joined before trying to encrypt the drive.
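The changed script itself isn’t reproduced here, so the following is only a rough sketch of the idea, written in Python for illustration (the real script is PowerShell). It assumes the join state can be read from the `AzureAdJoined` line in the output of `dsregcmd /status`; the function names are made up for this example.

```python
import re
import subprocess


def is_azure_ad_joined(dsregcmd_output: str) -> bool:
    """Return True only if the text contains an 'AzureAdJoined : YES' line."""
    match = re.search(
        r"^\s*AzureAdJoined\s*:\s*(\w+)",
        dsregcmd_output,
        re.MULTILINE | re.IGNORECASE,
    )
    return bool(match) and match.group(1).upper() == "YES"


def read_dsregcmd_status() -> str:
    """Run 'dsregcmd /status' and return its text output (Windows only)."""
    result = subprocess.run(
        ["dsregcmd", "/status"], capture_output=True, text=True, check=False
    )
    return result.stdout


def should_start_encryption(dsregcmd_output: str) -> bool:
    """Guard: only attempt encryption (and key escrow) on a joined device.

    When this returns False, the script should exit right away instead of
    waiting in a loop for an escrow that cannot succeed.
    """
    return is_azure_ad_joined(dsregcmd_output)
```

On a device, the guard would be called as `should_start_encryption(read_dsregcmd_status())`. Exiting early when it returns False means the script finishes in seconds during pre-provisioning rather than running into the 30-minute timeout.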

After enrolling my device again with Autopilot Pre-Provisioning it only took 7 minutes instead of the 36 minutes we noticed at the start of this dumb blog.

Conclusion

Please be aware that when your device takes 30 minutes to identify the apps in the Device ESP stage, something is timing out! That “something” could very well be the PowerShell scripts you are deploying to your devices!

Feel free to reach out to me if you are experiencing the same issue and your PowerShell scripts aren’t the cause.

But what if the pre-provisioning finishes in 10 minutes with 5 apps, but after sealing the device and assigning it to a user, the device phase takes more than 50 minutes for identifying apps? Nothing happens in the log after starting Win32 app inventory collection via WMI; the next line comes 17 minutes later and says ‘set timer, start the timer for workload powershell’, and after another 30 minutes ‘co-mgmt features are not available, ex= system.Management.ManagementException, not fatal’. Systems are Windows 10 21H2 and pure AAD joined, nothing hybrid.

Hi Rudy,

I have similar issue with the ESP – Device Setup – Application installation steps. In my case we are using the Windows Autopilot HAADJ with pre-provision. All the Apps that are part of ESP are Win32Apps including the O365 (Packaged as Win32Apps).

Issue – Every now and then the application installation takes a very long time. I have set the ESP timeout to 120 minutes because of this issue. Sometimes it finishes and gets the reseal within 40 minutes or 62 minutes, or it times out after reaching the 120 minutes. Not sure which application is causing this; every time I run the Autopilot diagnostics, it shows different apps.

Also, we are not using any PowerShell on the BitLocker side. We have configured the BitLocker policy via the Disk encryption settings in the Intune portal, which does the encryption silently.

Any suggestions what is going wrong with my ESP?

Thank you,

Sheetal

Any suggestions on this?

Hi… Could be 1 from the 1000 issues… to get to the bottom, you will need to start looking at some logs… or sending me some… How many apps are configured as required in the esp list?

Thanks for your message Rudy.

It is 13 applications (including the Office365 Win32App) which are during the ESP stage. None of them has any dependencies. What logs you need?

Hi Rudy,

I have similar issues in our environment. we are using HAADJ with Win32Apps and it is taking very long time to get the reseal option. Sometimes it finishes in 32 minutes, or 60 minutes or 80 minutes or it times out. In our case we have set to 120 minutes for this reason.

I had a check with my list of applications which are part of ESP and none of them has any powershell script.

For Bitlocker, we use silent encryption which is configured via Intune Endpoint Disk Encryption.

I am not that familiar with ETL traces or Fiddler.

Any suggestions will be really appreciated.

Thank you,

Sheetal

Also seeing a similar behaviour. We have a case open with Check Point FW, if you run without the CP inline ESP runs in approx 45mins. With CP inline it fails after the first app install, hangs around for over an hour and then we see a FIN sent from the workstation. The suspicion is the first app is a script and never gets to install the 2nd app. Some message is not getting back, it should be cut&dry firewall/packet capture analysis but we’re struggling. STu

Hi Rudy, sorry for necroing this thread, but we’ve recently needed to change our BitLocker config from Intune, and we are using the script you reference in part 5.

We have packaged it as a win32 app, and assigned it to all devices. How will the process become after making these changes? We’re in the process of testing an enrollment, but wanted to ask here as well.

Right now we don’t have any BitLocker OS settings in Intune configured, but do everything in the script.

Detection for this script is the existence of the task and some registry keys.

Will this not encrypt the disk during the preprovisioning at all, and wait until the user ESP? Will it fail the application and then retry it again during User ESP?

hi can I kindly suggest doing a few blog posts on the troubleshooting tools you use, how you use them, and how you get them on the device while still in OOBE?

We still have this issue, i have no platform scripts but Bitlocker encryption via Configuration Profile.

Anyone got any ideas, it’s really annoying.

The worst is that after resealing the machine after preprovisioning, at the next start ESP shows up again before user signs in and is identifying apps again, only to realize after many minutes that all the apps are already installed.

Anything to solve this would be appreciated. I have this issue in multiple tenants…

How did you resolve this issue?

I’m not convinced that some of the behaviour that Rudy shows in the blog is relevant any longer, due to changes MS have made under the hood. I have a BitLocker script which successfully kicks off encryption and sets a default PIN (don’t worry, this gets changed later). I now get stuck on identifying everything, and I believe the reason is that the drive encryption has kicked off too early, in part of the device preparation stage. I think this is then interfering with device setup. I am still testing workarounds, but the method I am using now is to detect the reg key of an application that gets installed during the ESP. Only once this app is installed will the drive encrypt. I don’t know if this is going to work… but it is the only explanation I can think of for the problems I am seeing.